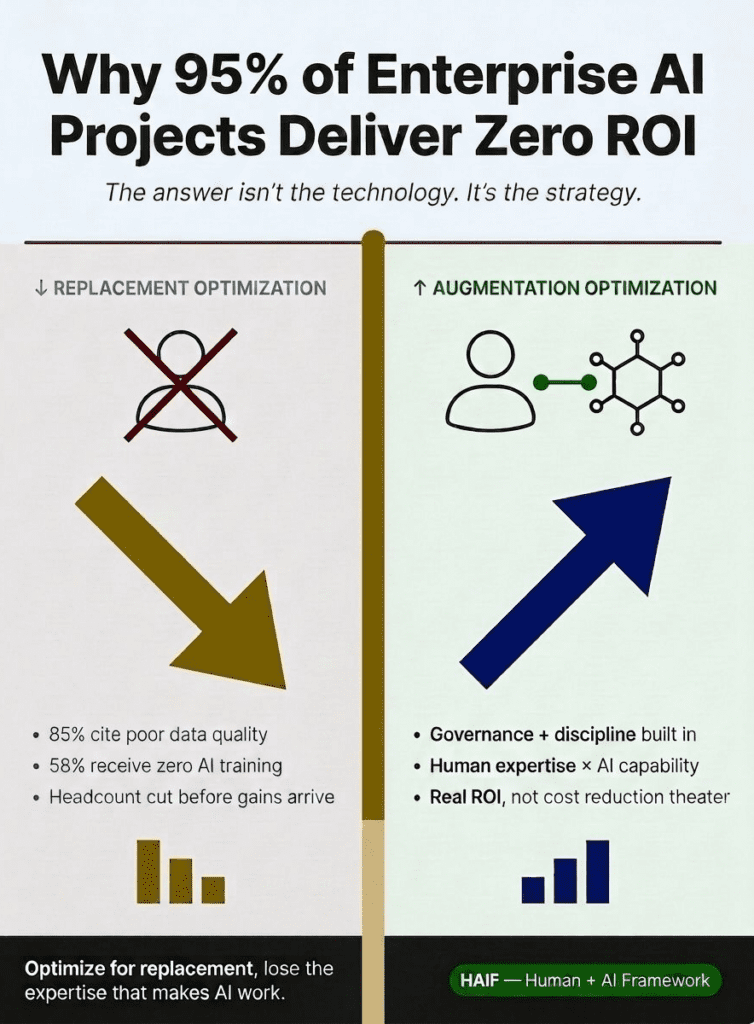

Most enterprise AI initiatives are failing to produce the financial returns leaders expected.

MIT’s GenAI Divide report found that 95% of organizations saw zero return from generative AI investments, even after $30 to $40 billion in enterprise spending. The report also found that only 5% of integrated AI pilots delivered millions in value, while most remained stuck with no measurable P&L impact.

Atlassian reported a similar pattern. Its AI Collaboration Index found that 96% of companies don’t see AI ROI, even though many teams report isolated productivity gains.

That should make every leadership team pause.

Companies have poured money into AI tools, pilots, consultants, internal task forces, and automation platforms. They have held planning meetings, tested new workflows, and encouraged employees to experiment with generative AI. Yet for many of them, the business case still isn’t showing up in the numbers.

The most typical explanation I’ve heard and read is that the technology hasn’t matured enough yet.

Sure, that could account for part of the problem, but it still doesn’t explain the whole pattern.

Many AI tools already work well enough to help people move faster, compare options, summarize information, draft materials, and analyze patterns. The technology has limitations (no one can deny that), but the bigger issue often begins before anyone opens a specific tool.

Too many companies have framed AI as a replacement strategy. As in, a replacement for people.

They want faster output, lower cost, and fewer people to pay. That goal may sound efficient in a boardroom, but it often creates the exact conditions that make AI fail.

When a company starts with replacement, it tends to underinvest in data quality, training, governance, workflow design, and human review.

Those are NOT minor details. They’re the parts of the system that turn AI from a tool into a real strategic asset.

The Strategy Problem Behind the ROI Problem

I queried Google for more viewpoints around this situation. Google’s AI Overview pointed to several familiar reasons enterprise AI projects fall flat.

Poor data quality appears near the top of the list. Many companies also default to generic chatbots rather than vertical AI designed around specific industries or functions.

AI often stays outside of the core workflow, and employees receive little or no training. Meanwhile, the executive team expects immediate returns, while the people closest to the work run into practical limitations every day.

Those issues look separate at first, but they are not.

They all trace back to the same strategic mistake: companies try to insert AI into the business without changing the operating model around it.

If your data lacks structure, AI can’t fix it. It will just make that weakness easier to scale.

Perhaps your employees don’t understand how to work with AI. You can’t just buy them a ChatGPT login and magically turn them into effective users.

And if AI sits outside your core workflows, it may create interesting demos without changing the way the business actually performs.

Leaders should not treat AI mainly as a cost-reduction tool. When they do, their organizations spend too much time focused on speed and volume, while the quality of the work starts to slip.

The failure point isn’t always going to be the model itself. In many cases, the company learns the hard way that it should have built the right conditions before expecting AI to produce measurable value.

AI Does Not Repair Weak Workflows

AI works best when it supports a workflow that already has a clear purpose, defined ownership, reliable inputs, and a known standard for quality. It can help people move faster, compare options, identify patterns, and reduce repetitive work.

But it can’t compensate for a process that nobody owns, a data set nobody trusts, or a decision path nobody has defined.

That distinction matters because many companies try to add AI on top of the same broken systems they have tolerated for years.

These weaknesses show up in practical ways:

- The sales team might be working from CRM data with missing fields, outdated stages, or inconsistent notes.

- Marketing could be organizing content around campaigns, instead of a clear taxonomy that AI can interpret.

- Leadership may be relying on reports that different departments define and explain differently.

- Customer success may track account history across several tools, which leaves important context scattered instead of connected.
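A quick readiness check can show how much of this applies to your own data before any AI tool touches it. The Python sketch below is a minimal illustration, not a finished audit: the field names (`owner`, `stage`, `last_updated`) and the 90-day staleness threshold are assumptions, and a real CRM export will look different.

```python
from datetime import date, timedelta

# Hypothetical required fields; adjust to match your own CRM export.
REQUIRED_FIELDS = ("owner", "stage", "last_updated")


def audit_records(records, today, max_age_days=90):
    """Count records that are missing required fields or have gone stale."""
    missing = 0
    stale = 0
    for record in records:
        # A record with any empty or absent required field counts as incomplete.
        if any(not record.get(field) for field in REQUIRED_FIELDS):
            missing += 1
            continue
        # A complete record still counts as stale if nobody updated it recently.
        if today - record["last_updated"] > timedelta(days=max_age_days):
            stale += 1
    total = len(records)
    return {
        "total": total,
        "missing_fields": missing,
        "stale": stale,
        "usable": total - missing - stale,
    }


# Made-up sample data, for illustration only.
sample = [
    {"owner": "Ana", "stage": "proposal", "last_updated": date(2025, 5, 20)},
    {"owner": "", "stage": "proposal", "last_updated": date(2025, 5, 1)},
    {"owner": "Raj", "stage": "discovery", "last_updated": date(2024, 11, 3)},
]
report = audit_records(sample, today=date(2025, 6, 1))
print(report)
```

If only a small share of records comes back as usable, that is a signal to fix the inputs first, because any AI summary built on them will inherit the same gaps.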

In the past, experienced people often helped the business work around those issues, because they knew where the gaps were. AI can’t automatically understand that context without oversight.

It will read the available inputs, follow the prompt, and produce an answer that may sound more confident than the underlying data deserves.

That’s why AI can create risk when companies treat it as a shortcut. The tool can make weak inputs look polished, and polished output can create a false sense of confidence.

That doesn’t mean companies should avoid AI. It means they need to stop pretending AI can make up for a lack of operating discipline.

Generic AI Adoption Is Not the Same as ROI

One reason this conversation gets muddled is that many employees do see value from AI at the individual level. The typical user may be able to draft an email faster, organize messy notes, summarize a meeting, brainstorm a campaign angle, or clean up a first draft.

Those gains matter, and they often make people feel like AI has already proven itself. But individual productivity is one small win, and enterprise ROI is a whole different ballgame.

A company can have hundreds of employees using AI each week and still see little movement in revenue, margin, customer retention, sales performance, reporting accuracy, or decision quality. The gap sits between personal tool use and workflow-level improvement.

That is where too many enterprise programs stall.

The company gives people access to AI, but it never defines where AI belongs in the work. It doesn’t identify which workflows should change, who owns the AI-assisted output, which parts require review, and how the company will measure whether the change improved anything that matters.

In that environment, AI activity can look like progress: people start using tools, different groups begin to experiment, and the execs start hearing promising anecdotes. But if the business is still running on the same processes beneath the surface, these small wins simply can’t scale to enterprise-wide value.

AI ROI doesn’t come from usage alone, but from better-designed work.

Training Needs to Match the Stakes

Many companies also underestimate the need for strong training, because generative AI tools feel easy to use. The interfaces invite experimentation, and almost anyone can type a prompt and get a response.

That creates the illusion that training can wait, which is a huge mistake!

Employees need much more than a login and a quick demo. They need to understand which use cases make sense, what inputs improve output quality, where the tool can create risk, what information they should never enter, and when human review needs to happen before anyone acts on the result.

Without that guidance, people will develop a range of habits. Some will use AI well, others will treat the first answer as good enough, and still others will use the tool in ways that create data, privacy, or quality problems.

Most of the time, those issues don’t come from bad intent, but rather, from a company that rolls out access without giving people a practical model for how to use it.

That creates a strange disconnect. Leadership wants enterprise-level ROI, while employees receive consumer-level guidance. That combination can’t hold up for very long.

Replacement Thinking Weakens the System Before AI Can Improve It

The riskiest version of this strategy shows up when companies cut headcount before AI gains have materialized.

On paper, that may look like a path to efficiency. In practice, it often removes the people who understand the exceptions, the history, the customer nuance, the data quirks, and the judgment calls that keep the business from making bad decisions.

Those people may not always document everything they know, but their subject matter expertise still protects the work. They catch numbers that look wrong, remember why one segment behaves differently from another, know when a customer situation needs extra context, and understand when a report tells only part of the story.

When a company removes that experience too hastily, AI won’t magically replace it. The remaining team has to move faster with less context, while the tool operates with fewer human checks around the output.

That may sound like a stronger system at face value, but in practice, it’s a thinner one.

The company may produce more reports, content, summaries, campaigns, and recommendations, but more output doesn’t guarantee you’ll also enjoy improved performance. In some cases, AI will simply help the organization scale the very problems it should have taken a step back to fix in advance.

The Better Path Starts With Augmentation

This is where your company can get ahead, even if larger competitors have bigger AI budgets.

Large enterprises often move first on tool adoption because they have the money, the technical teams, and the executive pressure to do something visible.

But they also come with more complexity, like disconnected systems, internal politics, approval layers, legacy processes, and pressure to show short-term savings.

That creates an opening for companies that move with more discipline. You don’t need to outspend larger competitors. You just need to out-design them.

Start with augmentation instead of replacement. Rather than asking which people AI can remove from the process, ask where AI can help your best people produce better outcomes, with less wasted effort.

That one shift changes the entire strategy.

AI can help your team analyze information faster, compare options, summarize patterns, pressure-test ideas, improve consistency, and reduce repetitive manual work. But you’ll achieve the best results when AI stays connected to the people who understand the business context.

That is the advantage.

You can choose the workflows that matter most, define how AI should support them, train people around practical use cases, and set review rules before the process gets out of control. Smaller and mid-sized companies often have a better chance to do this well, because they can make decisions faster and correct course sooner.

A large company may spend months debating an enterprise-wide AI strategy.

The rest of us can start by improving the workflows that already shape revenue, customer experience, marketing execution, sales follow-up, reporting, or operational speed. We can test the process, refine the rules, and expand what works, without turning every decision into a massive internal program.

That is how you can turn AI into a real operating advantage.

Governance Should Make AI Easier to Use Well

AI governance often gets framed as red tape, which makes a lot of people resistant to it before they even understand the purpose.

Good governance shouldn’t bury people in policy documents. It should give them a practical way to use AI without losing control of the work.

At a basic level, governance answers the questions that every AI workflow needs answered:

- Who owns this output?

- Who reviews it?

- What data can go into the tool?

- What decisions can AI support, but not make?

- When does a person need to approve the result?

- What happens when the output looks wrong, incomplete, biased, risky, or disconnected from the business reality?

Those questions won’t slow the business down when you answer them well. They’ll help you move faster, because people no longer have to guess where the boundaries are.
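To make that concrete, the answers to those questions can be written down once per workflow and checked automatically, instead of living in a policy document nobody opens. The sketch below is one possible shape in Python, under stated assumptions: `WorkflowPolicy`, `check_use`, the data class labels, and the role names are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowPolicy:
    """Hypothetical per-workflow answers to the governance questions."""
    owner: str                     # who owns the output
    reviewer: str                  # who reviews it
    allowed_data: frozenset        # what data can go into the tool
    human_approval_required: bool  # AI supports the decision, a person makes it
    escalate_to: str               # who hears about output that looks wrong


def check_use(policy, data_classes, reviewed, approved):
    """Return a list of problems; an empty list means the output may be used."""
    problems = []
    blocked = set(data_classes) - policy.allowed_data
    if blocked:
        problems.append("disallowed data: " + ", ".join(sorted(blocked)))
    if not reviewed:
        problems.append(f"needs review by {policy.reviewer}")
    if policy.human_approval_required and not approved:
        problems.append(f"needs approval from {policy.owner}")
    return problems


# Illustrative policy for a made-up sales forecasting workflow.
policy = WorkflowPolicy(
    owner="sales-ops lead",
    reviewer="account manager",
    allowed_data=frozenset({"public", "internal"}),
    human_approval_required=True,
    escalate_to="sales-ops lead",
)

problems = check_use(
    policy,
    data_classes={"internal", "customer_pii"},
    reviewed=True,
    approved=False,
)
print(problems)
```

The point of a structure like this is not the code itself. It is that every workflow has explicit answers, so people can see the boundary before they cross it.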

This is where many AI initiatives fail. The company spends time selecting tools, but not enough time defining the control layer around the work.

Then, AI adoption depends on individual judgment, informal habits, and whatever each team decides to do on its own. That may work for scattered experimentation, but it’s no way to drive repeatable ROI on the effort.

Why I Built HAIF

I built HAIF, the Human + AI Framework, in 2023 because this pattern was already visible. Companies were rushing toward AI adoption, but many of them were treating automation as the goal, instead of asking how humans and AI should work together inside real business processes.

The premise behind HAIF is simple: AI creates the most value when companies define the relationship between human expertise and machine capability, before they try to scale it.

That means the company needs to look at workflow readiness, data quality, role ownership, review rules, approval thresholds, escalation paths, measurement, and ongoing improvement. Those pieces may not sound as exciting as a shiny new AI platform, but they are the parts that dictate whether AI produces business value, or just more activity.

You can buy an expensive AI tool and still fail, if the surrounding workflow lacks discipline.

You can also start with a modest toolset and outperform larger competitors, if you start by building stronger Human + AI operating habits.

That is the point too many leaders miss: The tool matters, but the working model matters more.

The Real AI ROI Question

Most companies are asking the wrong question: “How much work can AI automate?”

That question pushes the decision makers toward replacement, and replacement pushes companies toward cost reduction, volume, and speed, before the company has protected quality, context, or accountability.

A better question is this: “Where can AI help our people create better business outcomes?”

That question points the company in a much healthier direction. It forces leadership to identify the workflows where better information, faster analysis, stronger consistency, or improved decision support would actually matter.

It also keeps human expertise in the system, instead of treating it as an obstacle to efficiency.

AI can improve productivity, help everyone move faster, and reduce low-value manual work.

But the companies that see real ROI won’t get there by removing human judgment from the work. They’ll get there by designing better Human + AI workflows, training their employees, improving the quality of their inputs, and building enough governance to scale what works.

That is the split we are seeing now. Some companies will keep chasing replacement and wonder why the return falls flat.

Others will use AI to strengthen how the business works. Those are the companies that will figure it all out first. Don’t you want to be in this group?

If you need help, reach out to me and we can customize a workflow plan to help you get this right the first time.